![]()

Why Testing Two Different Ads Against Each Other is the Secret of Every Successful Advertiser

Let me tell you about a disagreement that happens in marketing teams all over the world, every single day.

A team is writing a Google Ad for a new product. Two people have two different ideas for the headline.

Person One says: “Save 40% on Your First Order — Shop Now”

Person Two says: “Join 50,000 Happy Customers — Try Risk Free”

Both are reasonable headlines. Both have genuine logic behind them. Person One believes that a clear discount drives immediate action — people respond to saving money. Person Two believes that social proof is more powerful — people want to know that others have already made this decision and been happy with it.

The debate goes around the table. Everyone has an opinion. The team lead weighs in. Someone mentions what a competitor is doing. Someone else references an article they read about consumer psychology. The conversation becomes circular.

Eventually, a decision is made — not because anyone has proof that one headline works better than the other, but because someone in the room has more seniority, or more confidence, or simply more patience than the others.

The chosen headline runs. It gets results — some results, anyway. But nobody ever knows whether the other headline would have worked better. Maybe it would have generated thirty percent more clicks. Maybe it would have flopped. The question is permanently unanswered because only one option was ever tested.

This scenario plays out thousands of times every day across businesses of every size. And it represents one of the most expensive habits in all of marketing — making advertising decisions based on opinion rather than evidence.

The alternative has a name. It is called A/B testing. Sometimes called split testing. And it is, without exaggeration, the single practice that most consistently separates successful advertisers from those who plateau, wonder why results are mediocre, and keep making the same expensive guesses year after year.

What A/B Testing Actually Is — The Concept Behind the Jargon

The idea behind A/B testing is almost childishly simple. So simple, in fact, that its power is easy to underestimate until you have seen what it can do in practice.

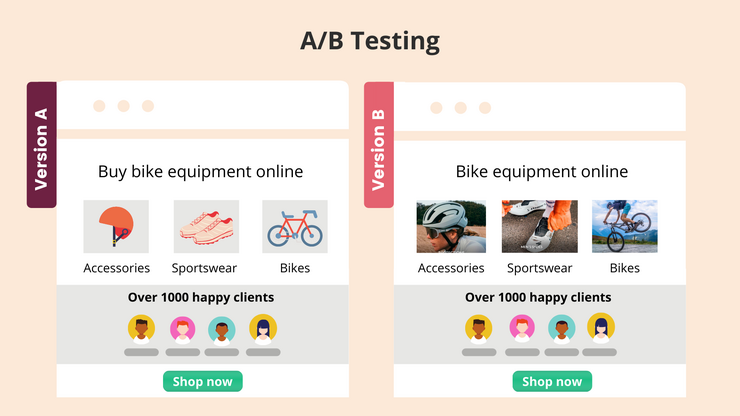

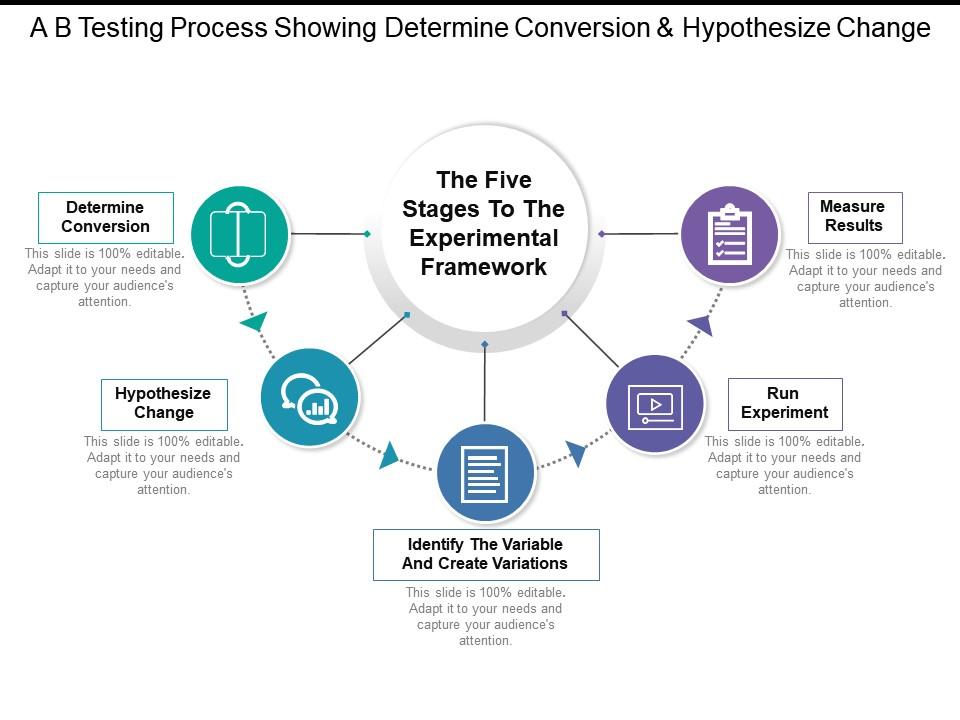

You take two versions of something — an ad, a headline, a landing page, an email subject line, a call to action button. You show Version A to one group of people and Version B to a different group of people. You measure which version produces better results. You keep the winner. You replace the loser with a new challenger. You test again.

That is it. That is A/B testing.

The principle was not invented by digital marketers. It was not invented for advertising at all. Its roots go back to the scientific method — the idea that if you want to know whether one thing works better than another, you test them under controlled conditions rather than relying on intuition or authority.

In medical research, this principle gave us clinical trials. In agriculture, it gave farmers the ability to test which fertiliser or crop variety produced better yields. In manufacturing, it became the foundation of quality control.

In advertising, it became the practice that every consistently successful marketer has quietly relied on for decades — long before digital tools made it easy.

David Ogilvy, widely considered one of the greatest advertising minds of the twentieth century, was obsessive about testing. He wrote extensively about the habit of running ads in different versions to see which outperformed. He was famously unimpressed by advertising that could not be measured and improved — because unmeasured advertising was, in his view, money spent on hope rather than evidence.

What has changed in the digital era is not the principle. It is the ease, the speed, and the precision with which testing can now be done. What once required weeks of running ads in different regional newspaper editions and painstakingly counting coupon responses can now be done in days with digital platforms that automatically split your audience, track every click and conversion, and tell you with statistical confidence which version is winning.

The tool has become extraordinarily powerful. The principle underneath it is timeless.

Why Intuition About Advertising Is Unreliable — The Uncomfortable Truth

Before we go deep into how A/B testing works and how to do it well, we need to address the reason most businesses do not test — and why that reason, however understandable, consistently leads them astray.

The reason is confidence in intuition.

Most business owners and marketers believe, with genuine sincerity, that they understand their customers well enough to know what kind of advertising will work for them. They have been in their industry for years. They know their product intimately. They have conversations with customers regularly. Surely this experience and knowledge translates into reliable judgment about what messages, what offers, what creative approaches will resonate.

It does not. Not reliably. And the evidence for this is overwhelming.

The history of advertising is littered with examples of creative that experts predicted would fail and that became phenomenally successful — and creative that experts predicted would succeed and that flopped completely. Marketing professionals with decades of experience and intimate knowledge of their market routinely get it wrong when they rely on intuition rather than testing.

Why? Several reasons.

First, experts suffer from the curse of knowledge. When you know your product deeply — when you understand every feature, every benefit, every nuance — it is genuinely difficult to see it through the eyes of a customer who is encountering it for the first time with different priorities, different assumptions, and different emotional contexts.

Second, humans are terrible at predicting what will motivate other humans to take action. We think we know what matters to people. But what people say they care about and what actually drives their behaviour are frequently different. The only reliable way to know what motivates your specific customers is to show them different options and observe what they choose.

Third, the most important variable in advertising — the customer’s emotional and cognitive state in the moment of seeing the ad — is invisible to the advertiser. You know what you wrote. You do not know whether the person seeing it is rushed, distracted, sceptical, open, hopeful, or fearful. Their state shapes how they receive your message in ways that no amount of market knowledge can fully predict.

Testing replaces these unreliable intuitive judgments with actual evidence. It asks the question not of experts in a meeting room but of real customers in real moments. And the answers real customers give — by clicking or not clicking, converting or not converting — are the only answers that matter.

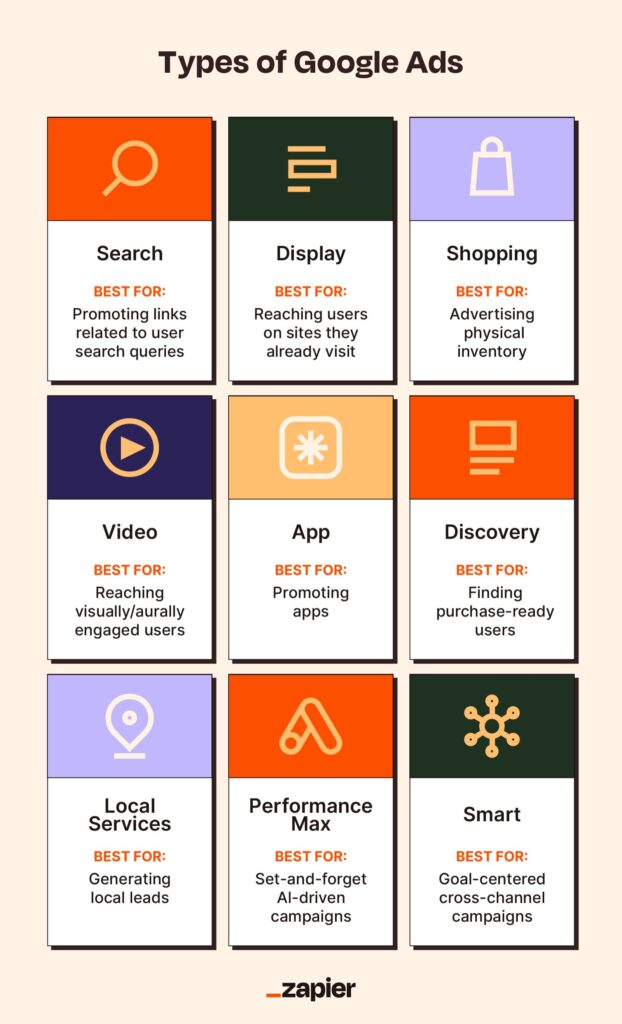

The First Big Test — Headlines

In Google Search Ads, the headline is where the battle is won or lost. As we explored in a previous post, the headline is the first and often only thing a searcher processes before deciding whether to click. The description lines, the extensions, the URL — all of these are secondary to the headline’s job of capturing attention and communicating relevance in the first two to three seconds of scanning.

Because the headline carries so much weight, it is also where the impact of testing is most dramatic. The difference between a mediocre headline and a great one is not ten or fifteen percent. In some cases, it is two hundred, three hundred, even five hundred percent difference in click-through rate.

This is not hyperbole. Split tests on ad headlines regularly reveal that one version dramatically outperforms another — not because the losing version was badly written, but because it framed the offer in a way that resonated slightly less with the specific audience at that specific moment.

Here are the types of headline variations that consistently produce surprising testing results — cases where the winning version was not always the one most people would have predicted.

Specific numbers versus vague claims

“Save Money on Insurance” versus “Save ₹4,200 on Your Annual Insurance Premium”

The specific version almost always wins — by significant margins. The specific number feels real, tangible, and testable in a way that the vague claim does not. But the margin of the win — how much more the specific version outperforms — varies by audience and product in ways that are difficult to predict without testing.

Question format versus statement format

“Lower Your Electricity Bill With Solar” versus “Is Your Electricity Bill Too High?”

The question format sometimes dramatically outperforms the statement — because it pulls the reader in by reflecting their own concern back at them. Sometimes the statement outperforms. The answer depends on the audience, the context, and the product. Testing is the only way to know for your specific situation.

Urgency versus reassurance

“Limited Time — Book Your Slot Now” versus “Book Anytime — No Commitment Required”

Urgency works powerfully for impulsive, low-consideration purchases. Reassurance works powerfully for high-consideration, high-anxiety purchases. But the boundary between these categories is less clear than it seems — and testing often reveals that the audience you thought was impulsive is actually anxious, or vice versa.

Benefit versus feature

“Four-Camera DSLR With 45MP Sensor” versus “Capture Every Detail — Even in Low Light”

The benefit version speaks to what the customer experiences. The feature version speaks to what the product is. For highly knowledgeable audiences who already understand the category, feature-focused headlines can win. For broader audiences, benefit-focused headlines tend to outperform. But again — tendency is not certainty, and testing is the only way to confirm for your specific product and audience.

The Second Big Testing Battleground — Offers and Calls to Action

After headlines, the second most impactful area for A/B testing in Google Ads is the offer structure and the call to action.

What you are asking the customer to do — and how you frame what they will get in exchange — has an enormous influence on whether they take that action. And the framing that works best is rarely obvious in advance.

Consider these pairs of calls to action for the same service:

Pair One: “Call Us for a Free Quote” versus “WhatsApp Us — Reply in 5 Minutes”

One emphasises the free quote. The other emphasises the speed and ease of response. For customers who are primarily concerned about cost, the first might win. For customers who are primarily concerned about convenience and speed, the second might win. Which type of customer dominates your specific audience? Test and find out.

Pair Two: “Book Your Free Consultation Today” versus “Book Your Free 20-Minute Consultation — No Obligation”

The second version adds two specific details — the time commitment and the absence of obligation. These details address anxiety that may be preventing people from booking. Does adding them help, or does the extra detail make the call to action feel cluttered? Test and find out.

Pair Three: “Get 20% Off — First Order Only” versus “Try It Risk Free — Full Refund Guarantee”

Both are incentives. One reduces the upfront financial commitment. The other reduces the perceived risk of a bad decision. For price-sensitive audiences, the discount might dominate. For quality-sensitive audiences worried about whether the product will meet expectations, the guarantee might dominate. Which is your audience? Test and find out.

The pattern in all of these examples is the same. Reasonable people can construct reasonable arguments for why any of these options might win. But the arguments are irrelevant. What matters is what your specific customers, in their specific context, actually respond to. And that question can only be answered by showing them both options and observing which they prefer.

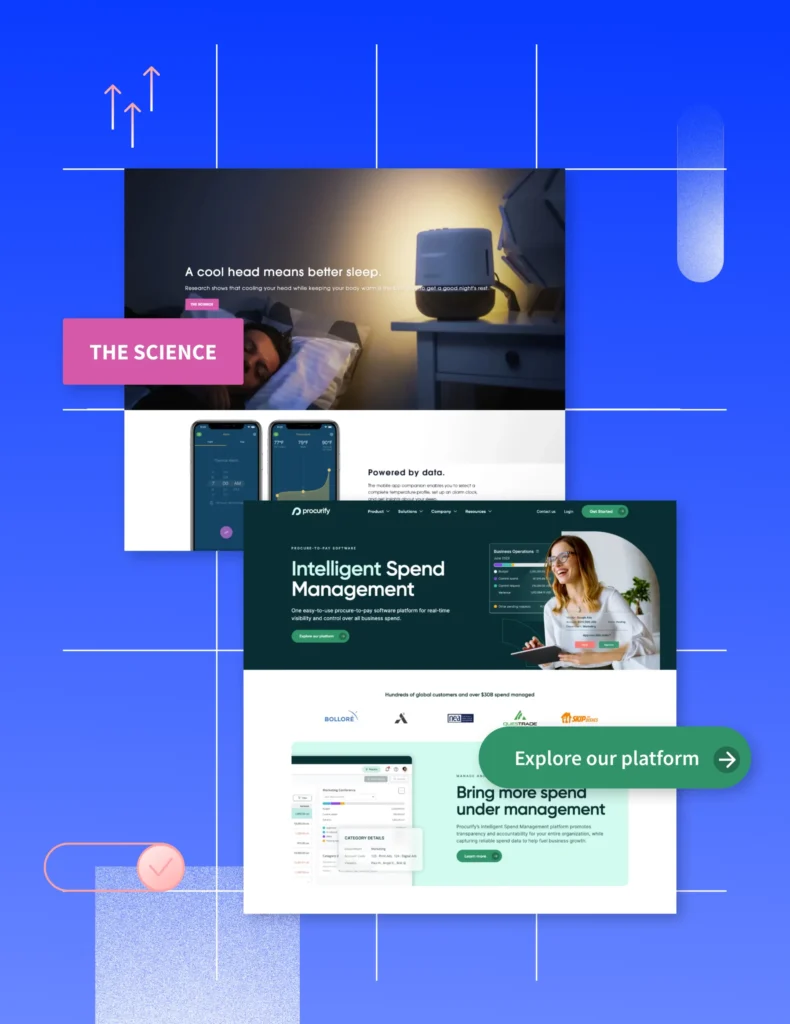

Landing Page Testing — Where the Real Money Is

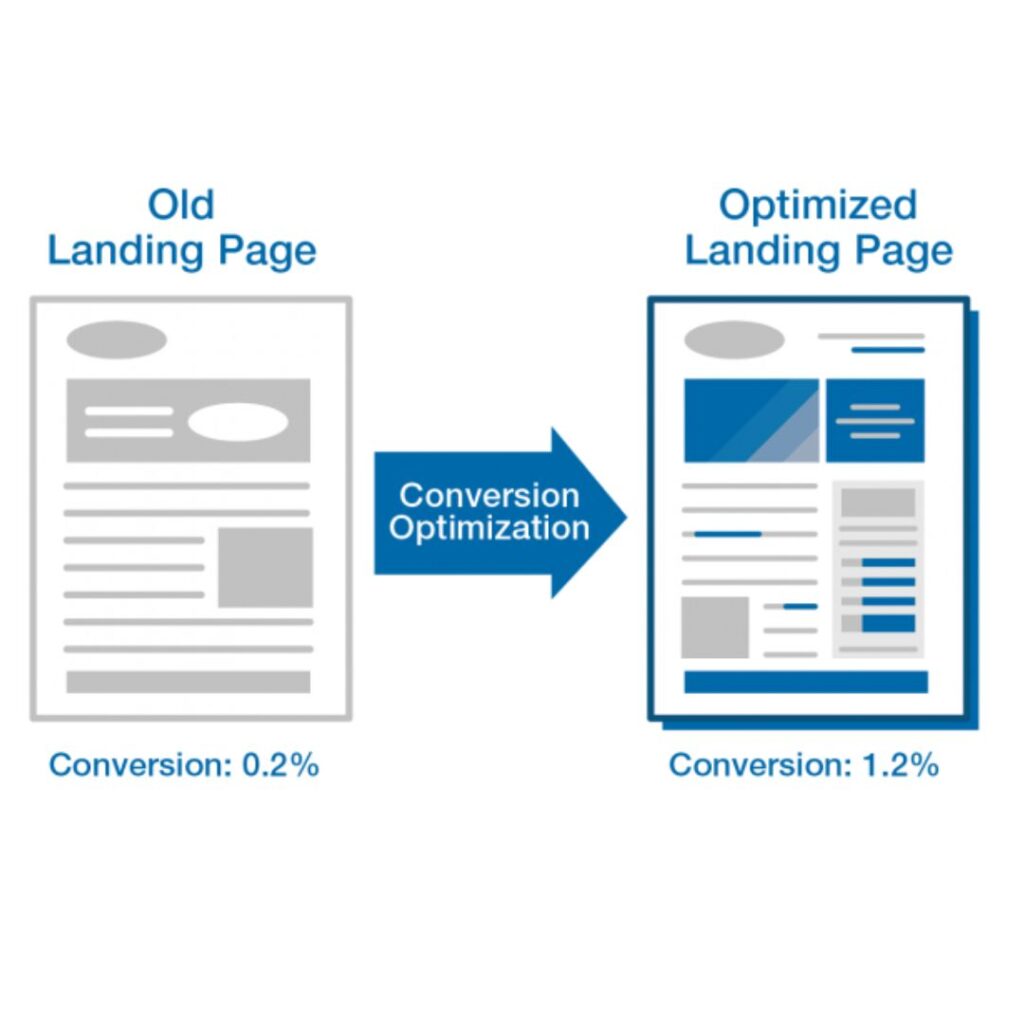

Google Ad copy testing is important. But the testing that often produces the most dramatic improvements in cost per acquisition is landing page testing — testing different versions of the page people land on after clicking your ad.

Here is why. A Google Ad with a three percent click-through rate delivers thirty clicks per thousand impressions. A landing page with a five percent conversion rate converts those thirty clicks into one and a half customers.

Improve the ad click-through rate from three to four percent — a significant improvement — and you get forty clicks, still converting at five percent for two customers.

But improve the landing page conversion rate from five to ten percent — and those same thirty clicks produce three customers. Same ad. Same clicks. Twice the customers.

Landing page conversion rate improvements often have a larger absolute impact on campaign efficiency than ad copy improvements, because the landing page is where the final decision is made. The ad creates the click. The landing page creates the customer.

What should you test on landing pages?

The Headline

The landing page headline is the first thing a visitor sees when they arrive. It needs to immediately confirm that they have found what the ad promised. Testing different headline formulations — different emphases, different problem framings, different benefit statements — can have significant conversion impact.

The Primary Call to Action

What is the main thing you are asking visitors to do? Call? Fill out a form? Book an appointment? And how is that ask presented? The colour of the button, the wording on the button, the placement of the button, the presence or absence of supporting text around it — all of these can be tested and all can meaningfully influence conversion rates.

“Book Your Free Consultation” versus “Yes, I Want to Reduce My Electricity Bill” as button text sounds like a trivial difference. In testing, it often is not.

The Form Length

For lead generation pages, the length and complexity of the enquiry form is one of the most impactful variables. A form asking for name, phone number, and one specific question outperforms a form asking for twelve pieces of information, in almost every test — but the magnitude of the difference, and which fields are truly necessary, requires testing to determine for your specific context.

Social Proof Placement

Reviews, testimonials, customer counts, and case studies can be placed in different positions on a landing page. Near the headline, near the form, in a dedicated section mid-page. Testing different placements reveals where social proof has the most influence on conversion — and the answer is often different from what intuition suggests.

The Lead Image or Video

For product pages, does a product image convert better than a lifestyle image? Does a video increase conversions or does it distract? These questions are genuine and their answers vary by product category and audience in ways that make testing the only reliable way to find the truth.

How to Run an A/B Test Properly — The Methodology That Makes Results Trustworthy

Understanding what to test is only half the challenge. The other half is running tests in a way that produces trustworthy results — results you can act on with genuine confidence rather than results that are misleading because the test was conducted poorly.

Several principles govern well-run A/B tests.

Test One Variable at a Time

This is the most important and most frequently violated principle of A/B testing.

If you change the headline and the call to action and the hero image simultaneously between Version A and Version B — and Version B performs better — you do not know which change caused the improvement. Maybe it was the headline. Maybe it was the image. Maybe it was the call to action. Maybe it was some interaction between all three.

Testing multiple variables simultaneously means you cannot isolate cause and effect. You know that the package of changes worked better — but you do not know what to carry forward, what to test further, or what principle to apply to future decisions.

Test one variable at a time. If you want to test three things, run three sequential tests. Each test teaches you something specific and actionable. Three simultaneous changes teach you a confused aggregate that you cannot interpret clearly.

The exception to this rule is multivariate testing — a more sophisticated methodology that can test multiple variables simultaneously using statistical techniques that isolate each variable’s contribution. But multivariate testing requires much larger traffic volumes and more complex analysis than simple A/B testing. For most businesses, sequential single-variable tests are more practical and produce equally valuable learning.

Run the Test Long Enough to Reach Statistical Significance

This is the second most frequently violated principle — and violating it is responsible for a huge proportion of misleading test results.

Statistical significance is a measure of how confident you can be that the difference in performance between two versions is a real difference caused by the variable you changed, rather than random variation. If you run a test for two days and Version B gets twenty percent more clicks than Version A, that could mean Version B is genuinely better — or it could mean you happened to run Version B on a Tuesday and Wednesday when your particular audience is slightly more active.

To distinguish genuine improvement from random noise, you need to run the test long enough and with enough data to reach statistical confidence. The typical threshold is ninety-five percent confidence — meaning there is only a five percent probability that the observed difference is due to chance.

The amount of data required to reach this threshold depends on the size of the difference between the two versions and the volume of traffic you are working with. A large difference — one version getting double the conversions of another — becomes statistically significant with less data than a small difference of ten or fifteen percent.

As a practical guide: most meaningful tests should run for a minimum of two weeks and should accumulate at least one hundred conversions per variant before being evaluated. Shorter tests and smaller samples produce misleading results — and acting on misleading results is worse than not testing at all, because it gives false confidence in the wrong conclusion.

Split Traffic Randomly and Equally

For a test to be valid, the two audiences seeing your different versions need to be essentially identical in their characteristics — the same mix of demographics, search behaviors, device types, and time-of-day patterns. If Version A runs on weekdays and Version B runs on weekends, any difference in performance could be explained by the different audience composition rather than by the creative difference.

Google Ads handles this automatically when you set up ad variations correctly — it distributes impressions between variants randomly and simultaneously. But if you are manually managing a test — running Version A for two weeks and then switching to Version B for two weeks — seasonal variation, external events, and audience changes over time can contaminate your results.

Whenever possible, run both versions simultaneously with random traffic distribution. Do not run sequential tests unless you have no alternative — and if you do run sequential tests, interpret the results with appropriate caution.

Document Everything

Every test you run is a piece of learning — not just about which version won, but about why it might have won and what that tells you about your audience. But this learning is only accessible if you have a record of what you tested, when you tested it, what the results were, and what hypothesis you were testing.

Build a simple testing log — a spreadsheet or document that records every test with these details. Over time, this log becomes an invaluable asset — a documented understanding of your audience that accumulates with each test and informs increasingly sophisticated future tests.

A business that has been testing systematically for two years has something genuinely valuable: a body of evidence about what their specific customers respond to, built from hundreds of real interactions rather than theoretical assumptions. This knowledge is a competitive advantage that is difficult for a competitor to quickly replicate.

The Testing Mindset — What Separates Testing Cultures From Opinion Cultures

The businesses that extract the most value from A/B testing are not necessarily the ones with the largest budgets or the most sophisticated tools. They are the ones that have built what might be called a testing culture — an organisational habit of making decisions based on evidence rather than opinion.

In a testing culture, nobody wins an argument by being senior or confident. They win it by having evidence. “I think the discount headline will perform better” is a hypothesis to be tested, not a conclusion to be accepted. “We tested both versions for three weeks and the discount headline had a thirty percent higher click-through rate” is evidence to be acted on.

This sounds straightforward. In practice, it requires genuine intellectual humility — the willingness to be wrong about your own ideas, to have your favourite creative concept outperformed by a simple alternative you initially dismissed, and to let the data override your instinct even when the data tells you something uncomfortable.

The marketers and business owners who build this habit consistently outperform those who rely on opinion — not because they are smarter, not because they have better creative instincts, but because they have a process for learning that removes their blind spots over time.

Every test you run makes you a slightly more accurate judge of what works for your specific customers. After fifty tests, your intuition about your audience — now calibrated by fifty rounds of real evidence — is genuinely valuable. Before you have done that testing, your intuition is no better than a well-educated guess.

Common Testing Mistakes — What to Avoid

Like any practice, A/B testing can be done poorly in ways that produce misleading results or waste resources. Here are the most common mistakes.

Stopping the Test Early When a Version Appears to Be Winning

This is called peeking — checking results before the test has run long enough and stopping it as soon as one version looks better. It is one of the most seductive mistakes in testing because it feels like good management — you are responding quickly to what the data is showing.

The problem is that early results are dominated by random variation. In the first few days of a test, one version will often appear to be performing better simply by chance. If you stop the test at this point, you will frequently choose the wrong winner — and then run your campaigns based on a false conclusion.

Run the test to completion. Resist the urge to peek. Trust the process.

Testing Insignificant Variables

Not all variables are worth testing. The colour of a subheading three scrolls down a landing page is unlikely to meaningfully impact conversion rates. Testing it is not wrong exactly — but it is a poor use of your testing resource compared to testing variables with genuine leverage, like headlines, offers, and primary calls to action.

Prioritise your testing calendar around variables that are most likely to produce meaningful improvements. The highest-impact variables are almost always: headlines, offers, primary calls to action, form length, and hero images or videos.

Drawing Universal Conclusions From Specific Tests

A test result is valid for the specific audience, the specific time period, and the specific context in which it was conducted. It does not necessarily generalise.

If a test of two headlines in Delhi during October shows that a discount-focused headline outperforms a social proof headline — that result tells you something useful about your Delhi audience in October. It does not necessarily tell you that discount headlines always outperform social proof headlines for all audiences in all contexts.

Be careful about generalising testing conclusions too broadly. Use each test to make specific decisions and to generate hypotheses for further testing. Do not treat a single test result as a universal truth.

Testing Without a Hypothesis

The most valuable tests are designed to answer a specific question — “Does naming the specific problem in the headline increase click-through rate compared to naming the solution?” — rather than just running two random variations to see which happens to perform better.

Testing with a hypothesis means that regardless of which version wins, you learn something about the principle behind it. If your hypothesis is confirmed — great, you know something useful about your audience. If it is rejected — the losing version wins — that is equally valuable learning, because it tells you that your audience does not respond to the principle you expected them to respond to.

Design tests with hypotheses. Record the hypothesis before you run the test. Evaluate the result against the hypothesis after. This discipline transforms testing from a mechanical comparison into a genuine learning process.

The Compounding Effect of Continuous Testing

Here is the perspective on A/B testing that makes its importance fully visible — the compounding effect over time.

Imagine a business running Google Ads with a baseline conversion rate of three percent. They run one A/B test per month. Each test produces an average improvement of ten percent in whatever metric they are testing — some tests improve things more, some tests produce no improvement or even a slight decline, but the average impact is ten percent.

After twelve months of continuous testing — one test per month, ten percent average improvement per test — the compounding mathematics produce a result that is dramatically better than the starting point.

Month one: three percent conversion rate Month three: three point six percent Month six: four point seven percent Month twelve: nine point four percent

The conversion rate has more than tripled in twelve months — not through a single dramatic intervention, not through a budget increase, not through a strategic overhaul. Through twelve small, disciplined, evidence-based improvements.

This compounding effect is the real power of systematic testing. Each individual test produces a modest improvement. But modest improvements compound. And over months and years, a business that tests consistently and applies its learnings rigorously develops a competitive advantage that is extraordinarily difficult for competitors who rely on intuition to match.

The best advertisers in every industry — the direct response marketers who have been running and testing campaigns for decades, the digital-native brands that have grown from nothing to market leadership — have not succeeded because they had better initial instincts than their competitors. They have succeeded because they built a testing machine that continuously refined their understanding of their customers and their markets, one experiment at a time.

Getting Started — Your First A/B Test This Week

If you are running Google Ads and have never run a systematic A/B test, here is the simplest possible way to start.

Go into your Google Ads account. Find your best-performing campaign. Look at the ads in that campaign. If you have only one ad in an ad group, you are not testing — you are guessing.

Write a second version of your best headline. Not a completely different ad — just a different headline that tests one specific variable. If your current headline leads with a discount — write a version that leads with social proof. If your current headline leads with a question — write a version that leads with a statement. If your current headline names the product — write a version that names the problem the product solves.

Upload the second version as a new ad in the same ad group. Make sure your ad rotation settings are set to “Rotate evenly” so Google shows both versions with equal frequency rather than automatically favouring one.

Wait three weeks. Collect data. Look at the click-through rate and conversion rate for each version. Identify the winner with appropriate caution about statistical significance given your traffic volume.

Keep the winner. Retire the loser. Write a new challenger. Test again.

That is it. That is how it starts. Simple, low-cost, immediately actionable.

The first test will teach you something. The second will teach you more. By the twentieth, you will have an understanding of your specific audience — what language they respond to, what offers motivate them, what fears they want addressed, what reassurances they need — that no amount of experience or intuition could have given you as reliably.

The Closing Truth — Opinions Are Free. Evidence Is Priceless.

Return to that meeting room at the beginning of this post. Two people with two different headlines. A room full of opinions. A decision made based on seniority rather than evidence.

That scene plays out every day in businesses that are, in a very real sense, flying blind — making expensive creative and strategic decisions based on what the most confident person in the room believes, rather than what their actual customers have demonstrated they respond to.

The alternative is not complicated. It is not expensive. It is not reserved for large companies with big data teams and sophisticated analytics infrastructure.

It is two versions of an ad, shown to real customers, with the results measured honestly. The winner kept. The loser replaced. The process repeated.

Person One’s discount headline and Person Two’s social proof headline were never in competition with each other. They were both hypotheses — reasonable, well-intentioned guesses about what customers might respond to.

The only way to find out which hypothesis was right was to ask the customers.

A/B testing is how you ask them.

Every successful advertiser knows this. Now you do too.

Written by Digital Drolia — helping businesses stop guessing and start growing through evidence-based advertising strategies. Found this valuable? Share it with a business owner or marketer who is still making advertising decisions in a meeting room instead of a testing environment.